The ChatGPT prompt framework that actually produces usable output

A four-part prompt structure (role, context, task, format) that turns ChatGPT from a novelty into a daily tool for founders. With templates you can paste today.

What makes a prompt actually work

A prompt is a compressed brief to a model that has never seen your business before. Written well, it produces output you’d edit in minutes. Written poorly — which is how most founders write them — it produces generic content you’d spend longer fixing than writing from scratch.

The difference is almost never the model. GPT-5, Claude Opus 4.7, Gemini — at the frontier tier they all respond well to the same structural cues. The difference is whether the prompt gives the model enough scaffolding to answer as if it already understood your business.

There’s a four-part structure that consistently closes this gap. It takes about ninety seconds to apply and removes most of the friction founders cite when they try AI and walk away disappointed.

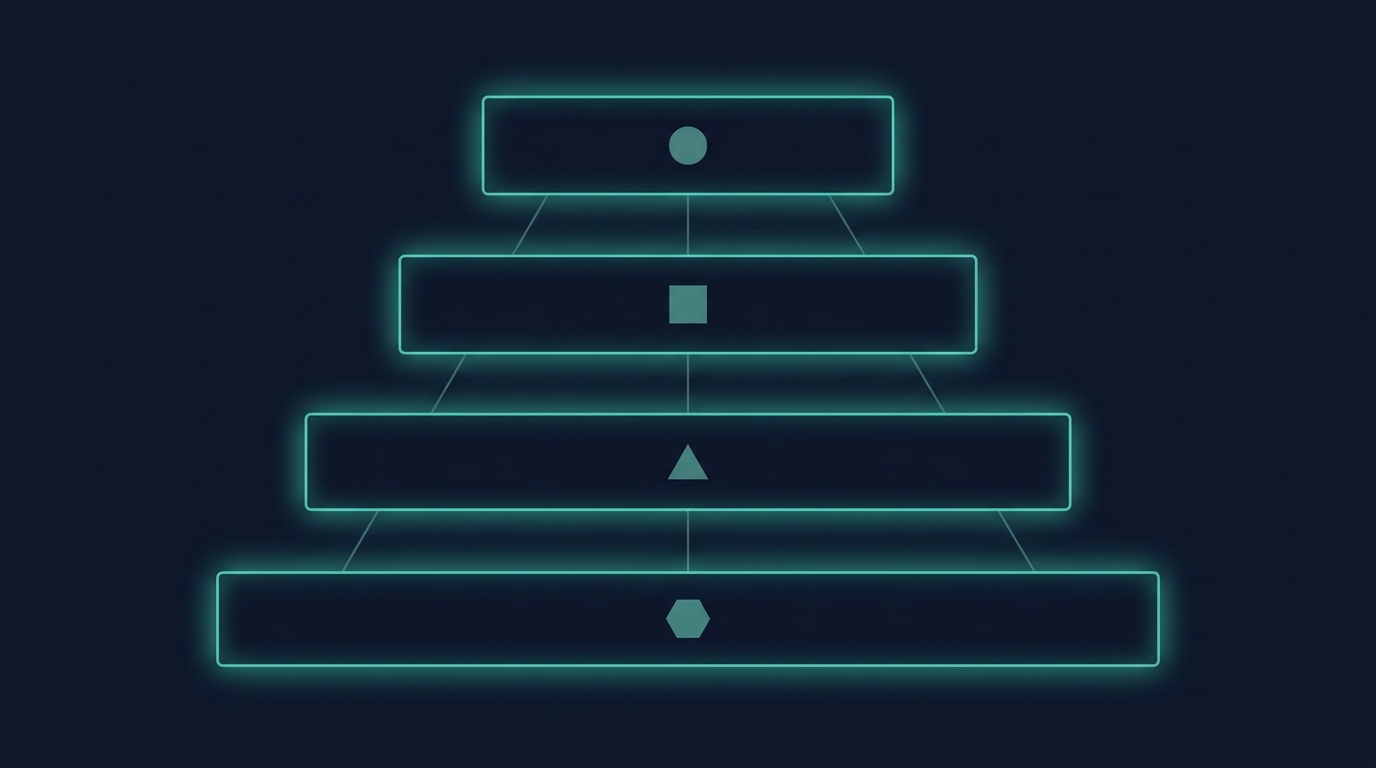

The four-part structure: role, context, task, format

Every useful prompt addresses four things in order.

1. Role — who is the model being, for this task?

“You are a direct-response copywriter who has written for B2B SaaS companies for ten years and knows how to avoid corporate-speak.”

The role compresses a huge amount of stylistic priming into one line. Without it, the model defaults to the most common voice in its training data — which, for most marketing tasks, is bland LinkedIn-influencer prose. The role redirects it.

2. Context — what does the model need to know about my situation?

“Our audience is founders of three-to-ten-person agencies in the UK who charge £5k–£20k per project and are trying to stop being the bottleneck in their own businesses.”

Context answers the “for whom” and “under what constraints” questions. Most generic output happens because the model, lacking context, aims at the average reader of the entire internet. Two sentences of specificity fix that.

3. Task — what specifically am I asking it to produce?

“Write a 200-word welcome email for new subscribers. Open with a one-sentence acknowledgement of the specific problem they signed up to solve, then give one immediately usable tip, then introduce our paid course as an optional next step without pressure.”

The task is the most common place founders go wrong — by being vague. “Write me something about AI” produces sludge. “Write a 200-word welcome email, opening sentence, body tip, soft CTA” produces a usable draft.

4. Format — how do I want it back?

“Plain text only. No markdown formatting. Use British English throughout. One paragraph per idea, maximum four paragraphs total. No emojis.”

This is the smallest piece and the one most founders skip. It matters because models have strong default format biases — Markdown headers, bullet lists, emoji openings — that may not fit the channel you’re writing for. Specify and they’ll comply.
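If you reuse the structure often, it can help to assemble the four parts in code so none gets skipped. The following is a minimal sketch, not a prescribed implementation: `PromptSpec` and `build_prompt` are hypothetical names, and the example strings are shortened versions of the ones above.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """The four parts of a prompt, in the order described above."""
    role: str
    context: str
    task: str
    output_format: str


def build_prompt(spec: PromptSpec) -> str:
    """Join the four labelled parts into one paste-ready prompt.

    Raises ValueError if any part is blank, since a skipped part is
    the failure the structure is meant to prevent.
    """
    parts = [
        ("Role", spec.role),
        ("Context", spec.context),
        ("Task", spec.task),
        ("Format", spec.output_format),
    ]
    missing = [label for label, text in parts if not text.strip()]
    if missing:
        raise ValueError(f"Missing prompt parts: {', '.join(missing)}")
    return "\n\n".join(f"{label}: {text.strip()}" for label, text in parts)


prompt = build_prompt(PromptSpec(
    role="You are a direct-response copywriter for B2B SaaS companies.",
    context="Our audience is founders of three-to-ten-person agencies in the UK.",
    task="Write a 200-word welcome email for new subscribers.",
    output_format="Plain text only. British English. Maximum four paragraphs. No emojis.",
))
print(prompt)
```

The blank-part check is the useful bit: it turns "I forgot the format line" from a disappointing draft into an immediate error.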

A real before-and-after

To make this concrete, here’s the same job done two ways.

Weak prompt:

Write me a welcome email for my business newsletter.

Result: 350 words of generic greeting, a value-prop sentence about your “amazing journey together”, three vague bullet points about what subscribers can expect, and an aggressive call to “stay tuned”. Reads like it could belong to any brand.

Four-part prompt:

Role: You are a direct-response email copywriter.

Context: Audience is solo agency owners in the UK who just signed up for a free course on delegation. They’re time-poor and sceptical of generic advice.

Task: Write a 200-word welcome email. Open with a single sentence that names the delegation pain they probably feel on a Monday morning. Give one specific, usable tactic they could apply this afternoon. End with a soft invitation to read our delegation playbook at /escape-the-founder-bottleneck, in a no-pressure tone.

Format: British English. Plain text. Four short paragraphs. No bullet points. No emojis.

Result: 195 words, a specific Monday-morning opening line, a concrete tactic (“batch your VA check-ins to a single 15-minute slot at 11am”), and a CTA that reads like a genuine recommendation. Ship-ready after a two-minute edit.

Common mistakes founders make

Four patterns that quietly ruin otherwise-capable prompts.

Asking for “tips” instead of a deliverable

“Give me tips on email marketing” produces a listicle. “Write a 150-word Monday send promoting our new bundle” produces something you can schedule. Always ask for the deliverable you actually need, not the research that might inform it.

Leaving out the audience

The model cannot guess who you’re writing for, and will default to an audience that doesn’t exist — some synthesis of all marketing content it was trained on. Even one sentence about the reader (“time-poor founder of an agency doing £30k/month”) pulls the output closer to your audience’s actual vocabulary and objections.

Specifying style instead of voice

“Make it punchy and engaging” is a style instruction the model satisfies by adding exclamation marks. “Voice: direct, no jargon, slightly dry, written as if we respect the reader’s time” is a voice instruction that changes sentence structure, vocabulary, and rhythm.

Not telling it what to avoid

Explicit negatives matter. “No motivational language. No AI clichés like ‘unlock’ or ‘dive into’. No rhetorical questions at the start of paragraphs” removes most of the tells that make AI copy feel AI-generated. Always include 3–5 things to avoid in anything customer-facing.
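An avoid-list can also be checked after the fact. The sketch below scans a draft for banned phrases and flags paragraphs that open with a question. It is an illustration under assumptions: the function names are hypothetical, "game-changer" is an invented third entry, matching is whole-word only (so "unlock" will not catch "unlocking"), and the code cannot tell whether a question is rhetorical, only that the paragraph starts with one.

```python
import re

# Hypothetical avoid-list; the first two entries come from the example above.
BANNED_PHRASES = ("unlock", "dive into", "game-changer")


def find_tells(draft: str, banned: tuple[str, ...] = BANNED_PHRASES) -> list[str]:
    """Return the banned phrases present in the draft (case-insensitive, whole words)."""
    found = []
    for phrase in banned:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        if re.search(pattern, draft, flags=re.IGNORECASE):
            found.append(phrase)
    return found


def starts_with_question(paragraph: str) -> bool:
    """True if the paragraph's first sentence ends in a question mark."""
    first_sentence = re.split(r"(?<=[.!?])\s+", paragraph.strip(), maxsplit=1)[0]
    return first_sentence.endswith("?")


draft = "Ready to scale? Let's dive into three ways to unlock growth."
print(find_tells(draft))
print(starts_with_question(draft))
```

A check like this does not replace the avoid-list in the prompt. It catches the cases where the model ignored it.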

Templates you can paste today

Three templates for the highest-leverage marketing jobs, formatted for direct paste into ChatGPT, Claude, or any frontier model.

Template 1: Newsletter draft

Role: You are an editorial newsletter writer for founders.

Context: My audience is [describe — one sentence]. This week's topic is [topic]. My existing voice is [direct / warm / analytical].

Task: Write a 400-word newsletter. One strong hook sentence. Three specific, actionable insights in sequence. A one-sentence close that invites reply.

Format: British English. Plain text. No headers. No emojis. Mobile-readable paragraphs (2-3 sentences max each).

Avoid: motivational language, "dive into", rhetorical questions, passive voice.

Template 2: Ad variants

Role: You are a direct-response ad copywriter for Meta.

Context: Product is [name] at [$price]. Audience is [one sentence]. Core benefit is [specific outcome].

Task: Write 5 ad variants, each 25-35 words. Each variant must lead with a different angle: (1) specific result, (2) common pain, (3) contrarian claim, (4) social proof, (5) curiosity hook. End each variant with the same CTA: [your CTA].

Format: Plain text. Number each variant. No emojis. British English.

Template 3: Customer-question response

Role: You are customer support for [business], skilled at writing concise, warm replies that resolve problems quickly.

Context: Customer [describe the situation in one sentence]. Their underlying concern is probably [guess]. My policy on this is [policy].

Task: Write the reply. Acknowledge the specific concern in one sentence. Explain what we can do in two sentences. Offer one concrete next step.

Format: British English. Under 90 words. No headers or bullet points. Warm but professional.

Where this fits in a broader content system

The four-part prompt is the primitive. The next level is stringing primitives together — one prompt produces the draft, another edits for voice, another extracts three social posts from it. That workflow, plus the prompt libraries that survive real campaigns, is the operational substance of the AI Content Advantage bundle.

But you don’t need the bundle to start. Open ChatGPT tonight, write one prompt with all four parts, and run the same job you’d have done manually. The delta in quality is usually visible on the first attempt.
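The chaining idea in this section (one prompt drafts, another edits for voice, another extracts social posts) can be sketched as a function that feeds each output into the next prompt. This is a rough illustration only: `run_chain` and `echo_model` are hypothetical names, the step prompts are abbreviated to a task line rather than the full four parts, and the "model" is a stand-in function, not a real API client.

```python
from typing import Callable

# A "model" here is any function from prompt text to response text.
# Swap in a real API client of your choice; this sketch assumes none.
Model = Callable[[str], str]


def run_chain(model: Model, brief: str, voice: str) -> dict[str, str]:
    """Draft, edit for voice, then extract social posts, passing each output forward."""
    draft = model(f"Task: Write a first draft from this brief.\n\nBrief: {brief}")
    edited = model(f"Task: Edit the draft below for voice. Voice: {voice}\n\nDraft: {draft}")
    posts = model(f"Task: Extract three social posts from the text below.\n\nText: {edited}")
    return {"draft": draft, "edited": edited, "posts": posts}


def echo_model(prompt: str) -> str:
    """Stand-in model for a dry run: echoes the first line of the prompt it received."""
    return f"[response to: {prompt.splitlines()[0]}]"


result = run_chain(
    echo_model,
    brief="Welcome email for a delegation course",
    voice="direct, slightly dry",
)
for step, text in result.items():
    print(f"{step}: {text}")
```

Keeping every intermediate output in the returned dictionary makes it easy to see which step in the chain let the quality slip.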

The one thing to internalise

Structured prompts aren’t about making the model smarter. They’re about making yourself more specific. The reason vague prompts produce vague output is that vague prompts are impossible to answer well — there’s no target.

Get specific about role, context, task, and format, and the model’s output quality converges on the quality of your specification. Which is the same thing that’s always been true of briefs given to humans.

Frequently asked questions

Does this work with all models, or just ChatGPT?

All frontier models — ChatGPT, Claude, Gemini, Grok — respond to the same structural cues. The four-part framework is model-agnostic. Output quality scales with model capability, but the framework itself is universal.

How long should a prompt be before it becomes counterproductive?

In practice, anything over about 800 words starts to dilute the instruction. Models tend to weigh the end of the prompt more heavily, so a long prompt can quietly lose its earlier context. Aim for 150-400 words per prompt for most tasks.
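A quick word count before you paste makes these thresholds easy to apply. The sketch below uses the rough figures from this answer (150-400 as the target, 800 as the upper limit). The function name and the labels are hypothetical, and splitting on whitespace is only an approximation of how a model measures length.

```python
def classify_prompt_length(prompt: str) -> tuple[int, str]:
    """Return (word_count, label) using the rough thresholds from the answer above."""
    words = len(prompt.split())
    if words > 800:
        label = "over 800: likely to dilute the instruction"
    elif words > 400:
        label = "above target: consider trimming"
    elif words >= 150:
        label = "within the 150-400 target"
    else:
        label = "below target: check that all four parts are present"
    return words, label


count, label = classify_prompt_length("word " * 250)
print(count, label)
```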

Can I use this framework for coding or technical tasks?

Yes, with small adjustments. Make the role specific to your stack ('Senior TypeScript engineer familiar with Next.js 15'), and give the 'format' section extra attention (return a code block, include imports, no explanation unless asked). Otherwise the structure is identical.

Should I save and reuse prompts, or rewrite each time?

Save them. A prompt library pays back fast. Keep them in a simple document — or a shared tool like Notion — organised by task type (welcome emails, ads, customer replies). Each one is a piece of capital.
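If your library lives in code rather than a document, one possible shape is a dictionary keyed by task type, with the `[placeholder]` fields filled in at use time. This is a sketch under assumptions: `PROMPT_LIBRARY` and `fill_template` are hypothetical names, the saved template is an abbreviated version of Template 3, and the business details in the example are invented.

```python
import re

# Hypothetical library keyed by task type. The entry is an abbreviated
# version of the customer-reply template, with [placeholder] fields.
PROMPT_LIBRARY = {
    "customer_reply": (
        "Role: You are customer support for [business].\n\n"
        "Context: Customer [situation]. My policy on this is [policy].\n\n"
        "Task: Write the reply. Offer one concrete next step.\n\n"
        "Format: British English. Under 90 words."
    ),
}

PLACEHOLDER = re.compile(r"\[([^\[\]]+)\]")


def fill_template(task_type: str, values: dict[str, str]) -> str:
    """Fill every [placeholder] in a saved template; fail loudly if one is left unfilled."""
    template = PROMPT_LIBRARY[task_type]
    missing = sorted({name for name in PLACEHOLDER.findall(template) if name not in values})
    if missing:
        raise KeyError(f"Unfilled placeholders: {', '.join(missing)}")
    return PLACEHOLDER.sub(lambda m: values[m.group(1)], template)


# Invented example values, for illustration only.
print(fill_template("customer_reply", {
    "business": "Acme Studio",
    "situation": "was charged twice for one invoice",
    "policy": "refund duplicates within two working days",
}))
```

Failing on an unfilled placeholder matters more than it looks: a prompt sent with a literal "[policy]" in it tends to produce a confident reply about a policy you never stated.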

What's the single biggest improvement I can make today?

Always include an audience sentence. Even one sentence about who the content is for pulls the first draft noticeably closer to usable. It's the lowest-effort, highest-impact change most founders haven't made.
